Representation Learning in Financial Time Series: The Quiet Revolution Reshaping Quantitative Finance

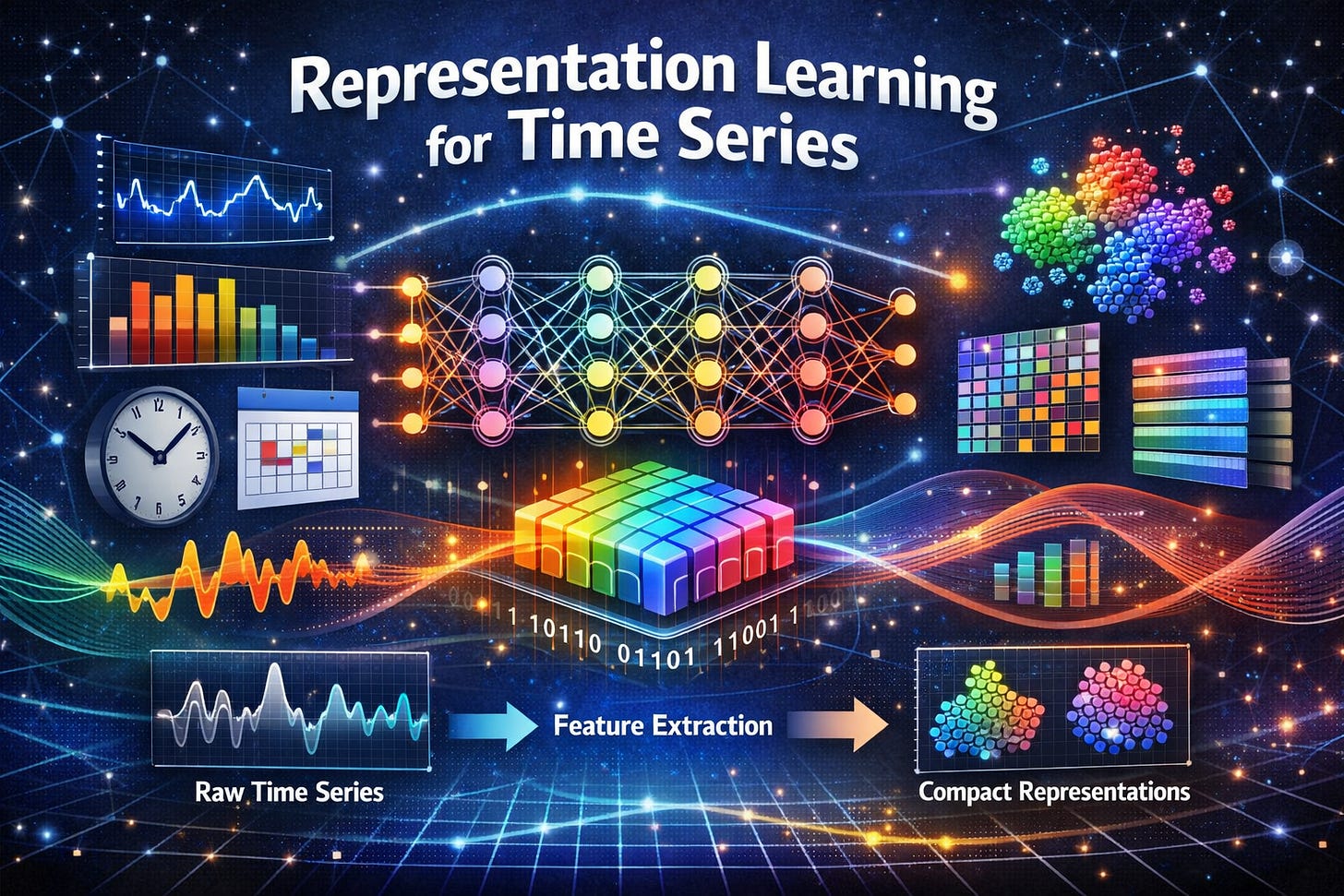

A Primer on Representation Learning in Time Series

The financial markets generate an astonishing volume of data every second. Price ticks, order books, trading volumes, earnings reports, sentiment signals from news and social media: it’s a torrent of information that would overwhelm any human analyst. For decades, quantitative finance relied on hand-crafted features and statistical models to extract signal from this noise. Momentum indicators, volatility measures, and factor loadings served as the building blocks of systematic trading strategies.
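To make "hand-crafted features" concrete, here is a minimal sketch of two of the building blocks mentioned above: a momentum indicator and a rolling volatility measure. The function names, the 20-period lookback, and the synthetic price path are illustrative assumptions, not a prescribed recipe.

```python
import numpy as np

def momentum(prices: np.ndarray, lookback: int) -> np.ndarray:
    """Trailing simple return over `lookback` periods (NaN until enough history)."""
    out = np.full(prices.shape, np.nan)
    out[lookback:] = prices[lookback:] / prices[:-lookback] - 1.0
    return out

def rolling_volatility(prices: np.ndarray, window: int) -> np.ndarray:
    """Sample standard deviation of the last `window` log returns."""
    log_ret = np.diff(np.log(prices))  # log_ret[i] is the return from i to i + 1
    out = np.full(prices.shape, np.nan)
    for t in range(window, len(prices)):
        out[t] = log_ret[t - window:t].std(ddof=1)
    return out

# Synthetic random-walk prices, for illustration only (not market data)
rng = np.random.default_rng(0)
prices = 100.0 * np.exp(np.cumsum(rng.normal(0.0, 0.01, size=300)))

# Each row is a hand-designed feature vector for one time step
features = np.column_stack([momentum(prices, 20), rolling_volatility(prices, 20)])
```

Every column here encodes a human decision about what matters and over which horizon. Representation learning replaces those decisions with parameters fitted to the data.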

But something fundamental is changing. A new paradigm called representation learning is transforming how machines understand financial time series, moving from carefully designed features to learned abstractions that emerge organically from the data itself. This shift represents nothing less than a reimagining of what it means to model financial markets.

What Is Representation Learning, and Why Does It Matter for Finance?

At its core, representation learning is about teaching machines to discover useful features automatically. Rather than telling an algorithm that momentum matters, or that volatility clustering is important, we let the model learn what patterns in the data are predictive.

The idea originated in computer vision and natural language processing, where researchers found that neural networks trained on large datasets could learn rich, hierarchical representations of images and text. A model trained to recognize objects in photos doesn’t just memorize pixel patterns; it learns abstract concepts like edges, textures, shapes, and eventually semantic categories like “cat” or “car.” Similarly, language models don’t just memorize sequences of words; they learn grammatical structures, semantic relationships, and even rudimentary reasoning.

Financial markets, it turns out, exhibit their own rich structure. Price movements contain patterns at multiple timescales, momentum effects, mean reversion, volatility regimes, and cross-sectional relationships between assets. The question driving representation learning in finance is: can we teach machines to discover these patterns automatically, learning representations that capture the essential structure of markets without explicit human guidance?

The answer, increasingly, is yes.

The Challenge: Why Financial Data Is Different

Before diving into the methods, it’s worth understanding why financial time series present unique challenges for machine learning. This isn’t image classification or chatbot development; the problems are genuinely harder.

Low signal-to-noise ratio. Financial markets are notoriously noisy. The efficient market hypothesis suggests that prices incorporate available information quickly, leaving little exploitable signal. Even sophisticated models may achieve only slightly better than random accuracy on return prediction tasks, yet those small edges can be enormously valuable in practice.

Non-stationarity. Market dynamics change over time. The patterns that worked in 2015 may not work in 2025. Volatility regimes shift, correlations break down, and regulatory changes alter market microstructure. Any learned representation must be robust to these changes or continuously adapt to them.

Adversarial dynamics. Unlike natural phenomena, markets respond to the strategies used to predict them. When a pattern becomes widely known, it often disappears as traders exploit it away. This creates a moving target that differs fundamentally from static prediction tasks.

Limited data. Despite the apparent abundance of market data, the effective sample size for learning complex patterns may be quite small. Daily data for a single stock provides only about 250 observations per year. Even high-frequency data, while voluminous, contains fewer independent regime shifts or market cycles than its size might suggest.

Complex interdependencies. Assets don’t move in isolation. Stocks are connected through supply chains, shared investors, sector memberships, and macroeconomic exposures. Learning representations that capture these relational structures requires specialized architectures.

The Architecture Zoo: How Neural Networks Learn Financial Representations

The past five years have seen an explosion of neural architectures applied to financial time series. Each brings different inductive biases, that is, built-in assumptions about what kinds of patterns are important.

Recurrent Neural Networks and Their Evolution

The earliest deep learning approaches to financial time series borrowed heavily from sequence modeling in NLP. Recurrent neural networks (RNNs), particularly Long Short-Term Memory (LSTM) networks and Gated Recurrent Units (GRUs), became workhorses for capturing temporal dependencies.

LSTMs address the vanishing gradient problem that plagued vanilla RNNs, allowing them to learn long-range dependencies in sequential data. For financial applications, this means capturing how information from weeks or months ago might still be relevant for today’s predictions. Research has demonstrated that LSTMs can capture long-term dependencies in financial time series and have shown strong performance in various forecasting tasks.
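A single LSTM time step can be sketched in a few lines of NumPy. This follows the standard textbook cell equations; the gate ordering, weight shapes, and random weights are assumptions made for illustration, and no training is performed.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def lstm_step(x, h_prev, c_prev, W, U, b):
    """One LSTM step. W: (4H, n_in), U: (4H, H), b: (4H,).
    Gates are stacked as: input, forget, output, candidate (an assumed layout)."""
    H = h_prev.shape[0]
    z = W @ x + U @ h_prev + b
    i = sigmoid(z[:H])            # how much new information to write
    f = sigmoid(z[H:2 * H])       # how much of the old cell state to keep
    o = sigmoid(z[2 * H:3 * H])   # how much of the cell state to expose
    g = np.tanh(z[3 * H:])        # candidate values
    c = f * c_prev + i * g        # additive update: the long-memory path
    h = o * np.tanh(c)
    return h, c

# Run the cell over a synthetic sequence of 3 features per time step
rng = np.random.default_rng(0)
n_in, hidden, T = 3, 8, 60
W = rng.normal(0.0, 0.1, (4 * hidden, n_in))
U = rng.normal(0.0, 0.1, (4 * hidden, hidden))
b = np.zeros(4 * hidden)
inputs = rng.normal(0.0, 1.0, (T, n_in))

h, c = np.zeros(hidden), np.zeros(hidden)
for x_t in inputs:
    h, c = lstm_step(x_t, h, c, W, U, b)  # h is the learned summary of the history
```

The line `c = f * c_prev + i * g` is the key design choice: when the forget gate is close to one, the cell state passes through almost unchanged, which is what lets information from many steps back survive.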

But recurrent architectures have limitations. They process sequences step by step, making them slow to train and difficult to parallelize. They also struggle with very long sequences, despite their theoretical ability to remember distant information.

The Transformer Revolution

The introduction of the Transformer architecture in 2017 marked a turning point. By replacing recurrence with attention mechanisms, Transformers can process entire sequences in parallel while learning to focus on the most relevant parts of the input.

The self-attention mechanism allows each element in a sequence to attend to all other elements, creating rich representations that capture dependencies at arbitrary distances. For financial time series, this means a model can learn that today’s price might depend on an event from six months ago, without needing to remember it through a long chain of recurrent computations.
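The mechanism described above can be sketched as single-head scaled dot-product self-attention. The projection matrices are random placeholders rather than trained weights, and the optional causal mask is an assumption added here because forecasting models usually must not look at future time steps.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(X, Wq, Wk, Wv, causal=False):
    """X: (T, d_model). Returns the attended values (T, d_v) and the weights (T, T)."""
    Q, K, V = X @ Wq, X @ Wk, X @ Wv
    scores = Q @ K.T / np.sqrt(K.shape[-1])
    if causal:
        T = X.shape[0]
        future = np.triu(np.ones((T, T), dtype=bool), k=1)
        scores = np.where(future, -np.inf, scores)  # block attention to later steps
    weights = softmax(scores, axis=-1)
    return weights @ V, weights

# Six time steps, four features each (synthetic values)
rng = np.random.default_rng(0)
T, d_model, d_k = 6, 4, 4
X = rng.normal(size=(T, d_model))
Wq, Wk, Wv = (rng.normal(size=(d_model, d_k)) for _ in range(3))

out, weights = self_attention(X, Wq, Wk, Wv, causal=True)
```

Row `weights[-1]` shows how the most recent time step distributes its attention over the whole history. Every past step is reachable in one hop, regardless of how far back it lies.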

Transformers have proven remarkably effective for time series forecasting. Models like Informer, Autoformer, and PatchTST have achieved state-of-the-art results on various benchmarks. Recent research has empirically confirmed that Transformers are indeed effective for time series forecasting, contrary to some earlier skepticism.

For financial applications, researchers have developed specialized variants. The “Stockformer” architecture, for instance, modifies the standard Transformer to handle the multivariate nature of stock prediction, using attention across both time and different market variables. The Modality-aware Transformer incorporates both numerical time series and textual information like earnings reports, using feature-level attention to weight different data sources appropriately.

Convolutional Approaches

Convolutional neural networks (CNNs), originally designed for images, have found surprising success in time series. One-dimensional convolutions can capture local patterns like short-term momentum or reversal, while dilated convolutions extend the receptive field to capture longer-range dependencies.

Temporal Convolutional Networks (TCNs) use stacked dilated convolutions to achieve receptive fields comparable to recurrent networks while maintaining parallel training. Because each filter looks at a small window of neighboring observations, CNNs are particularly good at modeling short-term dependencies in financial data.
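The way dilation extends the receptive field can be checked directly. The sketch below stacks causal dilated convolutions and feeds in a single impulse; it leaves out nonlinearities and residual connections, so it illustrates the receptive-field arithmetic rather than a full TCN. The helper names are hypothetical.

```python
import numpy as np

def causal_dilated_conv1d(x, kernel, dilation):
    """y[t] = sum_j kernel[j] * x[t - j * dilation], with zeros before the series starts."""
    x = np.asarray(x, dtype=float)
    pad = (len(kernel) - 1) * dilation
    padded = np.concatenate([np.zeros(pad), x])
    y = np.zeros_like(x)
    for j, w in enumerate(kernel):
        start = pad - j * dilation
        y += w * padded[start:start + len(x)]
    return y

def tcn_stack(x, kernel, dilations):
    """Apply one causal convolution per dilation, in sequence (no nonlinearity)."""
    for d in dilations:
        x = causal_dilated_conv1d(x, kernel, d)
    return x

def receptive_field(kernel_size, dilations):
    """Number of input steps that can influence one output step."""
    return 1 + (kernel_size - 1) * sum(dilations)

# A single impulse at t = 0 spreads exactly as far as the receptive field
impulse = np.zeros(32)
impulse[0] = 1.0
dilations = [1, 2, 4, 8]
response = tcn_stack(impulse, kernel=[1.0, 1.0], dilations=dilations)
reach = int(np.count_nonzero(response))
```

With a kernel of size 2 and dilations doubling at each layer, four layers already cover 16 time steps, so the receptive field grows exponentially with depth while the parameter count grows only linearly.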

Hybrid approaches combine the complementary strengths of these architectures. Researchers have proposed combining CNNs (good at capturing local patterns) with Transformers (good at capturing global context) to model both short-term and long-term dependencies within financial time series.

Graph Neural Networks: Learning Relational Structure

Traditional approaches treat each asset independently, ignoring the rich relational structure of financial markets. Graph neural networks (GNNs) change this by explicitly modeling relationships between entities.

In a GNN framework, stocks become nodes in a graph, connected by edges representing relationships such as supply chain links, shared investors, sector memberships, or statistical correlations. The network learns representations that incorporate information from neighboring nodes, capturing how movements in one stock might predict movements in related stocks.
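As a rough illustration of how a node absorbs information from its neighbors, here is one graph-convolution layer in the style of the widely used GCN formulation (symmetric normalization with self-loops). The four-stock graph, its edges, and the random features and weights are hypothetical; the article does not prescribe a particular GNN variant.

```python
import numpy as np

def normalized_adjacency(A):
    """D^{-1/2} (A + I) D^{-1/2}: adds self-loops, then normalizes by node degree."""
    A_hat = A + np.eye(A.shape[0])
    d_inv_sqrt = 1.0 / np.sqrt(A_hat.sum(axis=1))
    return A_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]

def gcn_layer(A_norm, H, W):
    """Average features over each node's neighborhood, project, apply ReLU."""
    return np.maximum(A_norm @ H @ W, 0.0)

# Hypothetical graph: stocks 0-1 and 1-2 are linked, stock 3 has no links
A = np.array([
    [0, 1, 0, 0],
    [1, 0, 1, 0],
    [0, 1, 0, 0],
    [0, 0, 0, 0],
], dtype=float)

rng = np.random.default_rng(0)
H = rng.normal(size=(4, 5))  # 5 input features per stock (synthetic)
W = rng.normal(size=(5, 2))  # untrained projection to a 2-dimensional embedding

A_norm = normalized_adjacency(A)
H1 = gcn_layer(A_norm, H, W)  # (4, 2): one embedding per stock
```

After one layer, the embedding of stock 1 mixes in features from stocks 0 and 2, while the isolated stock 3 only sees itself. Stacking layers widens the neighborhood one hop at a time.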

A systematic review of graph neural network-based methods for stock market forecasting notes that GNNs can process non-Euclidean data in the form of knowledge graphs, which helps them model complex hidden concepts and gives them an edge over other approaches.

Recent work has combined GNNs with large language models to create even more sophisticated systems. One framework uses ChatGPT to infer network structures from financial news, then employs GNNs to create company embeddings for stock movement prediction.

Self-Supervised Learning: Teaching Models Without Labels

A major limitation of supervised learning in finance is the scarcity of labeled data. While we have abundant price data, meaningful labels, such as which stocks will outperform or when regime changes occur, are rare and often noisy.

Self-supervised learning sidesteps this problem by creating learning tasks from the data itself. The model learns representations by solving pretext tasks that don’t require external labels, then fine-tunes these representations for downstream prediction tasks.

Contrastive Learning

Contrastive learning has emerged as one of the most powerful self-supervised approaches. The basic idea is simple: learn representations such that similar examples are close together while dissimilar examples are far apart. The challenge lies in defining what “similar” means for financial data.

Researchers have proposed novel contrastive learning frameworks to generate asset embeddings from financial time series. The approach uses the similarity of asset returns over many subwindows to generate informative positive and negative samples, employing a statistical sampling strategy based on hypothesis testing to address the noisy nature of financial data.

The key insight is that assets which move similarly over short windows likely share underlying characteristics worth capturing in the representation. By training a network to distinguish truly similar pairs from random pairs, the model learns embeddings that encode meaningful relationships.
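The "pull similar pairs together, push random pairs apart" objective is often written as an InfoNCE loss. The sketch below is a generic version of that loss, not the exact objective or sampling strategy of the framework described above; the temperature value and the random embeddings are assumptions.

```python
import numpy as np

def l2_normalize(Z):
    return Z / np.linalg.norm(Z, axis=1, keepdims=True)

def info_nce(anchors, positives, temperature=0.1):
    """Row i of `positives` is the positive for row i of `anchors`;
    every other row in the batch serves as a negative."""
    a, p = l2_normalize(anchors), l2_normalize(positives)
    logits = a @ p.T / temperature                    # (N, N) cosine similarities
    logits = logits - logits.max(axis=1, keepdims=True)
    log_prob = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return float(-np.mean(np.diag(log_prob)))         # cross-entropy on the diagonal

rng = np.random.default_rng(0)
anchors = rng.normal(size=(8, 16))                    # e.g. embeddings of 8 assets

# Positives that resemble their anchors give a low loss...
loss_aligned = info_nce(anchors, anchors + 0.05 * rng.normal(size=(8, 16)))
# ...while unrelated "positives" leave the loss near log(batch size)
loss_random = info_nce(anchors, rng.normal(size=(8, 16)))
```

Minimizing this loss with respect to the encoder that produces the embeddings is what shapes the representation. Here the embeddings are fixed, so the example only shows how the loss responds to good and bad pairings.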

These learned embeddings have proven useful for downstream tasks like industry sector classification and portfolio optimization, significantly outperforming traditional correlation-based approaches.

Masked Modeling and Reconstruction

Another self-supervised approach borrows from language models like BERT: mask out portions of the input and train the model to reconstruct them. For time series, this might mean masking future values and training the model to predict them, or masking intermediate values and learning to fill them in.
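A masked-reconstruction pretext task needs only two pieces: a mask and a loss computed on the masked positions. In the sketch below, linear interpolation stands in for the neural network that would normally produce the reconstruction; the 15% mask ratio and the sine-wave series are illustrative assumptions.

```python
import numpy as np

def random_mask(length, mask_ratio, rng):
    """Boolean mask with True at the positions hidden from the model."""
    n_masked = int(round(mask_ratio * length))
    mask = np.zeros(length, dtype=bool)
    mask[rng.choice(length, size=n_masked, replace=False)] = True
    return mask

def masked_mse(x, x_hat, mask):
    """Reconstruction error measured only where the input was hidden."""
    return float(np.mean((x[mask] - x_hat[mask]) ** 2))

rng = np.random.default_rng(0)
x = np.sin(np.linspace(0.0, 4.0 * np.pi, 200))  # synthetic smooth series
mask = random_mask(len(x), 0.15, rng)

# Placeholder "model": fill masked points by interpolating the visible ones.
# A real setup would use a trained encoder-decoder here.
visible = np.flatnonzero(~mask)
x_hat = x.copy()
x_hat[mask] = np.interp(np.flatnonzero(mask), visible, x[visible])

loss = masked_mse(x, x_hat, mask)
```

Scoring only the masked positions matters: if visible points counted toward the loss, the model could lower it by copying its input and would learn nothing about the structure of the series.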

Contrastive Predictive Coding (CPC) combines these ideas, using an autoregressive model to predict future latent representations from past ones. The self-supervised signal comes from distinguishing true future representations from random samples. This approach has been applied to financial time series forecasting, using automated feature generation to improve downstream prediction models.

Time-Frequency Consistency

A particularly elegant approach for time series leverages the fundamental duality between time and frequency representations. The same signal can be represented either as a sequence of values over time or as a combination of frequency components. A good representation should capture both views.
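The duality can be verified numerically with a discrete Fourier transform. The example below builds a synthetic signal from two sine waves, moves it to the frequency domain, and back again; the signal and the variable names are illustrative.

```python
import numpy as np

# Time view: 256 samples of two superimposed cycles (periods of 32 and 8 steps)
t = np.arange(256)
x = np.sin(2.0 * np.pi * t / 32) + 0.5 * np.sin(2.0 * np.pi * t / 8)

# Frequency view: complex spectrum of the real-valued signal
spectrum = np.fft.rfft(x)
amplitude = np.abs(spectrum)
dominant_bins = np.argsort(amplitude)[-2:]       # the two strongest frequencies

# The transform is invertible, so neither view loses information
x_back = np.fft.irfft(spectrum, n=len(x))

# Parseval's identity: total energy is the same in both views
energy_time = np.sum(x ** 2)
energy_freq = np.sum(np.abs(np.fft.fft(x)) ** 2) / len(x)
```

A period of 32 steps in a 256-sample window corresponds to frequency bin 256 / 32 = 8, and a period of 8 steps to bin 32, which is where the spectrum concentrates. What is spread across the whole window in the time view collapses to two numbers in the frequency view.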

The Time-Frequency Consistency (TF-C) framework positions time-based and frequency-based embeddings close together when they come from the same sample and far apart when they come from different samples. This provides a principled self-supervised signal that transfers effectively across different types of time series data. In experiments against multiple state-of-the-art methods, TF-C outperformed the baselines by significant margins in various settings.

Generative Models: From Understanding to Creation

While discriminative models learn to predict labels from inputs, generative models learn the underlying distribution of the data itself. This deeper understanding can yield better representations and enable novel applications like synthetic data generation.

Variational Autoencoders for Factor Models

Traditional factor models decompose asset returns into exposures to common factors plus idiosyncratic noise. Deep learning extends this framework by learning nonlinear factors that better capture the complexity of financial markets.
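The linear baseline that deep factor models generalize can be sketched as returns = factors × loadings + noise. The example simulates such returns and recovers a factor structure with principal component analysis; the dimensions, noise levels, and the choice of PCA as the estimator are assumptions for illustration.

```python
import numpy as np

# Simulate returns for N assets driven by K common factors plus idiosyncratic noise
rng = np.random.default_rng(0)
T, N, K = 500, 20, 3
true_factors = rng.normal(0.0, 0.01, (T, K))
true_loadings = rng.normal(0.0, 1.0, (N, K))
noise = rng.normal(0.0, 0.002, (T, N))
returns = true_factors @ true_loadings.T + noise

def pca_factors(returns, k):
    """Estimate k statistical factors and loadings via SVD of demeaned returns."""
    X = returns - returns.mean(axis=0)
    U, S, Vt = np.linalg.svd(X, full_matrices=False)
    factors = U[:, :k] * S[:k]                # (T, k) factor realizations
    loadings = Vt[:k].T                       # (N, k) asset exposures
    explained = float((S[:k] ** 2).sum() / (S ** 2).sum())
    return factors, loadings, explained

f_hat, B_hat, explained = pca_factors(returns, K)
residual = (returns - returns.mean(axis=0)) - f_hat @ B_hat.T  # idiosyncratic part
```

PCA recovers the factors only up to rotation and sign, and only linear structure. A nonlinear encoder replaces the SVD step when the relationship between factors and returns is not linear.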

FactorVAE integrates the dynamic factor model (DFM) with the variational autoencoder (VAE). The model uses a prior-posterior learning method that can effectively guide learning by approximating an optimal posterior factor model with future information. Importantly, the VAE’s latent space allows the model to estimate return variances in addition to predicting returns, which is crucial for risk modeling in noisy markets.
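Two generic VAE ingredients help explain how this works: a KL divergence that pulls the prior (which sees only past data) toward the posterior (which also sees future returns), and Gaussian latent factors that yield a predictive variance as well as a mean. The sketch below is a simplified illustration with a linear decoder and made-up numbers; it is not the FactorVAE implementation.

```python
import numpy as np

def reparameterize(mu, sigma, rng):
    """Sample z ~ N(mu, sigma^2) in a way that stays differentiable in mu and sigma."""
    return mu + sigma * rng.standard_normal(mu.shape)

def gaussian_kl(mu_q, sigma_q, mu_p, sigma_p):
    """KL( N(mu_q, sigma_q^2) || N(mu_p, sigma_p^2) ), summed over latent dimensions."""
    return float(np.sum(
        np.log(sigma_p / sigma_q)
        + (sigma_q ** 2 + (mu_q - mu_p) ** 2) / (2.0 * sigma_p ** 2)
        - 0.5
    ))

def predictive_moments(beta, mu_z, sigma_z):
    """Mean and variance of r = beta . z for independent Gaussian factors z
    (linear decoder without intercept: a simplifying assumption)."""
    return float(beta @ mu_z), float((beta ** 2) @ (sigma_z ** 2))

# Hypothetical two-factor example
mu_post, sigma_post = np.array([0.1, -0.2]), np.array([0.5, 0.1])    # posterior
mu_prior, sigma_prior = np.array([0.0, 0.0]), np.array([1.0, 1.0])   # prior
beta = np.array([1.0, 2.0])                                          # asset exposures

kl = gaussian_kl(mu_post, sigma_post, mu_prior, sigma_prior)
z = reparameterize(mu_post, sigma_post, np.random.default_rng(0))
mean_r, var_r = predictive_moments(beta, mu_post, sigma_post)
```

Because the factors are distributions rather than point estimates, uncertainty propagates to the return forecast: here the predicted return has mean -0.3 and variance 0.29.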

More recent work has extended this approach with recurrent architectures that capture temporal dependencies while maintaining the probabilistic framework. RVRAE is a dynamic latent factor model tailored to extract key factors from noisy market data efficiently; it combines RNNs with variational autoencoders to address the challenges of markets with low signal-to-noise ratios.

The latest evolution, FactorVQVAE, uses vector quantization to discretize the latent space. This enhances interpretability, as each code vector can be associated with distinct market states or risk regimes, while also addressing issues like posterior collapse that can plague continuous VAE representations.
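The quantization step itself is a nearest-neighbor lookup: each continuous latent vector is replaced by the closest entry in a learned codebook. The sketch below shows only that lookup, with a hand-written three-entry codebook and hypothetical regime labels in the comments; training the codebook is out of scope.

```python
import numpy as np

def quantize(z, codebook):
    """Map each row of z (N, d) to its nearest codebook row (K, d).
    Returns the quantized vectors and the chosen code indices."""
    dist2 = ((z[:, None, :] - codebook[None, :, :]) ** 2).sum(axis=-1)  # (N, K)
    idx = dist2.argmin(axis=1)
    return codebook[idx], idx

# Hypothetical codebook; the regime labels are illustrative, not learned
codebook = np.array([
    [0.0, 0.0],    # code 0, e.g. a "calm" state
    [1.0, 1.0],    # code 1, e.g. a "risk-on" state
    [-1.0, 1.0],   # code 2, e.g. a "stressed" state
])

# Continuous encoder outputs for three days (made-up values)
z = np.array([[0.1, -0.1], [0.9, 1.2], [-0.8, 0.7]])

z_q, codes = quantize(z, codebook)
usage = np.bincount(codes, minlength=len(codebook))  # how often each code is used
```

The integer `codes` are what make the representation inspectable: one can tabulate which code is active on which dates and compare that against known market episodes.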

Diffusion Models

Diffusion models, which have revolutionized image and audio generation, are now being applied to financial time series. These models learn to reverse a gradual noising process, generating samples by starting from pure noise and progressively denoising.
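The "gradual noising process" has a closed form in the common DDPM formulation, which makes it easy to sketch. The linear beta schedule, the 1,000 steps, and the synthetic return series are assumptions; the learned reverse (denoising) network is not shown.

```python
import numpy as np

def linear_beta_schedule(n_steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances (a common default schedule, assumed here)."""
    return np.linspace(beta_start, beta_end, n_steps)

def q_sample(x0, t, alpha_bar, rng):
    """Jump straight to step t: x_t = sqrt(abar_t) * x0 + sqrt(1 - abar_t) * eps."""
    eps = rng.standard_normal(x0.shape)
    x_t = np.sqrt(alpha_bar[t]) * x0 + np.sqrt(1.0 - alpha_bar[t]) * eps
    return x_t, eps

betas = linear_beta_schedule(1000)
alpha_bar = np.cumprod(1.0 - betas)   # fraction of the signal variance left at each step

rng = np.random.default_rng(0)
x0 = rng.standard_normal(128)         # stand-in for a standardized return window

x_early, eps_early = q_sample(x0, 100, alpha_bar, rng)   # lightly noised
x_late, eps_late = q_sample(x0, 999, alpha_bar, rng)     # essentially pure noise
```

A denoising network is trained to predict `eps` from `x_t` and `t`; generation then runs the steps in reverse, starting from noise. Choosing a small `t` corresponds to only partially noising a series, which is the regime relevant when a diffusion model is used as a denoiser rather than a generator.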

FTS-Diffusion applies diffusion models to generate synthetic financial time series while preserving important statistical properties (stylized facts) like fat tails and volatility clustering. The model uses a pattern recognition module to identify scale-invariant patterns, then generates new data conditioned on these patterns.

A particularly creative application uses diffusion models as denoisers for financial time series. By partially noising and then denoising the data, the model filters out high-frequency noise while preserving predictive signals. Research has demonstrated that diffusion model-based denoised time series significantly enhance performance on downstream future return classification tasks. Trading signals derived from the denoised data yield more profitable trades with fewer transactions.

Foundation Models: Toward General-Purpose Financial AI

The success of large language models like GPT-4 has inspired efforts to build foundation models for time series: large, pre-trained models that can be applied to diverse tasks with minimal adaptation.

Time Series Foundation Models

TimeGPT, TimesFM, and similar models are pre-trained on vast corpora of time series data across domains such as weather, energy, traffic, and, yes, finance. The hope is that by learning general temporal patterns, these models can transfer effectively to new datasets with little or no task-specific training.

The results in finance have been mixed. Research shows that generic time series pre-training does not directly transfer to financial domains, and that finance-native pre-training and data scaling are essential for realizing the full potential of foundation models in financial forecasting. Off-the-shelf time series foundation models perform poorly on financial data, while models pre-trained specifically on financial data deliver substantial forecasting and economic gains.

This finding has profound implications. It suggests that while the architectural innovations behind foundation models are valuable, financial data requires domain-specific pre-training to capture its unique characteristics.

Large Language Models for Finance

Perhaps the most exciting recent development is the application of large language models (LLMs) to financial prediction. At first glance, this seems counterintuitive: LLMs are trained on text, not numbers. But researchers have found clever ways to bridge this gap.

Time-LLM reprograms time series data into text prototype representations that are more natural for LLMs, augmenting the input with declarative prompts to guide reasoning. This allows leveraging the world knowledge and reasoning capabilities encoded in pretrained LLMs for time series tasks.

Research has shown that models which encode time series data to interact with a frozen LLM backbone have outperformed transformers on all benchmark datasets. However, their efficiency on complex datasets without clear seasonality or trend remains an open question, particularly relevant for financial data.

The multimodal potential is perhaps most exciting. LLMs can naturally incorporate textual information (news, earnings reports, analyst commentary) alongside numerical time series. This opens the door to models that understand not just what happened, but why it happened.

Research on LLMs in equity markets notes the increasing integration of unstructured textual data with structured financial indicators to improve stock prediction models and generate actionable market signals.

Conclusion: The Representation Matters

Representation learning is transforming quantitative finance by automating the discovery of predictive patterns in financial data. From recurrent networks to transformers, from autoencoders to diffusion models, the field has developed a rich toolkit for learning useful abstractions from raw market data.

But the technology is not magic. Financial markets present genuine challenges (low signal-to-noise ratios, non-stationarity, adversarial dynamics) that no architecture can fully overcome. The most successful applications combine sophisticated machine learning with deep domain expertise, rigorous backtesting, and careful attention to the many ways models can fail.

What’s clear is that the old paradigm of hand-crafted features and linear models is giving way to something new. The representations that future trading systems use won’t be designed by human engineers poring over price charts; they’ll emerge from algorithms processing millions of examples, discovering patterns that humans might never have thought to look for.

Whether this represents progress depends on your perspective. For quantitative researchers, it offers powerful new tools. For traditional fundamental analysts, it raises questions about the future relevance of human judgment. For regulators, it creates new challenges in understanding and overseeing increasingly opaque systems.

One thing is certain: the machines are learning the language of markets, one representation at a time.

Next time we encounter representation learning, we will go straight to coding!
